[GitHub]

Citation

@inproceedings{du2021curious,

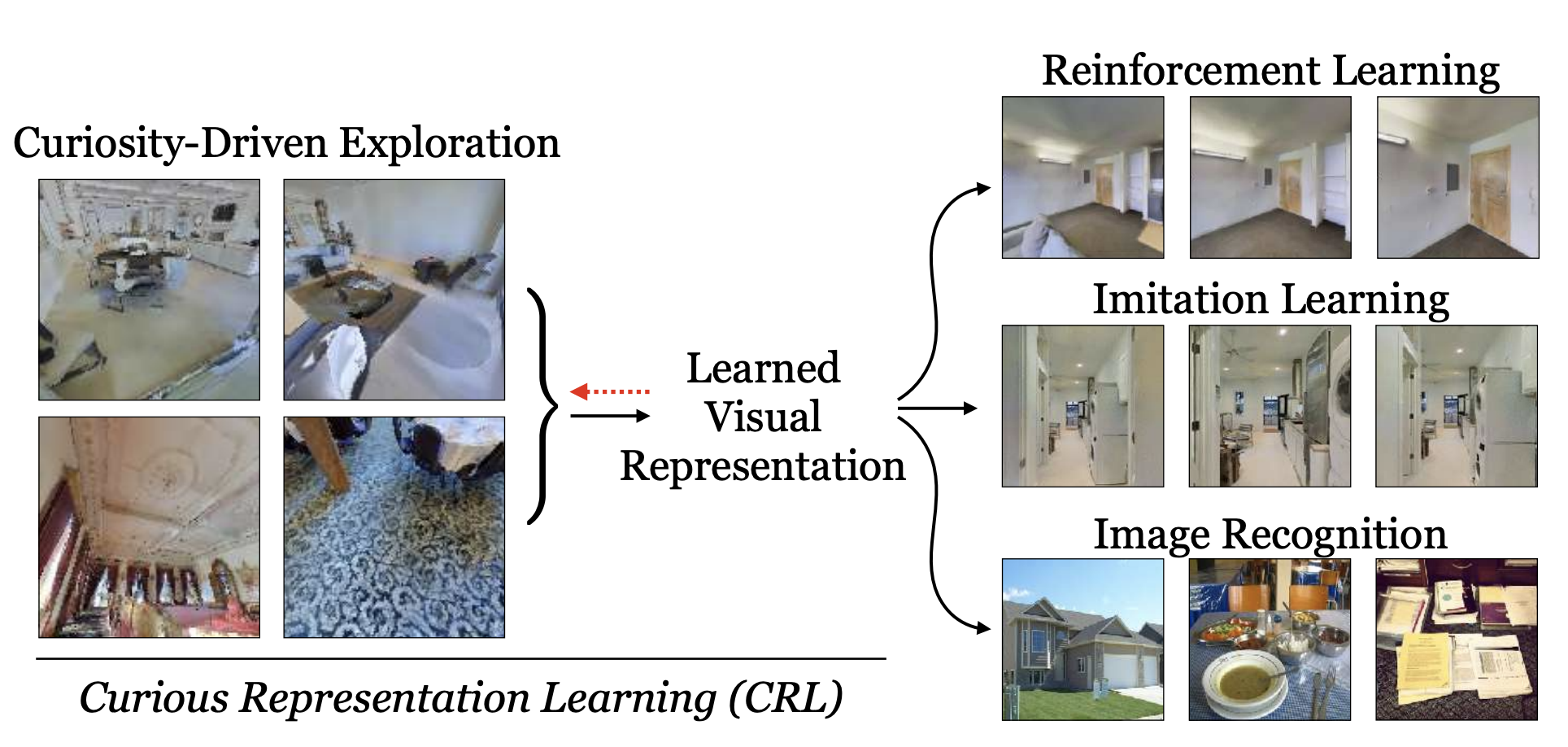

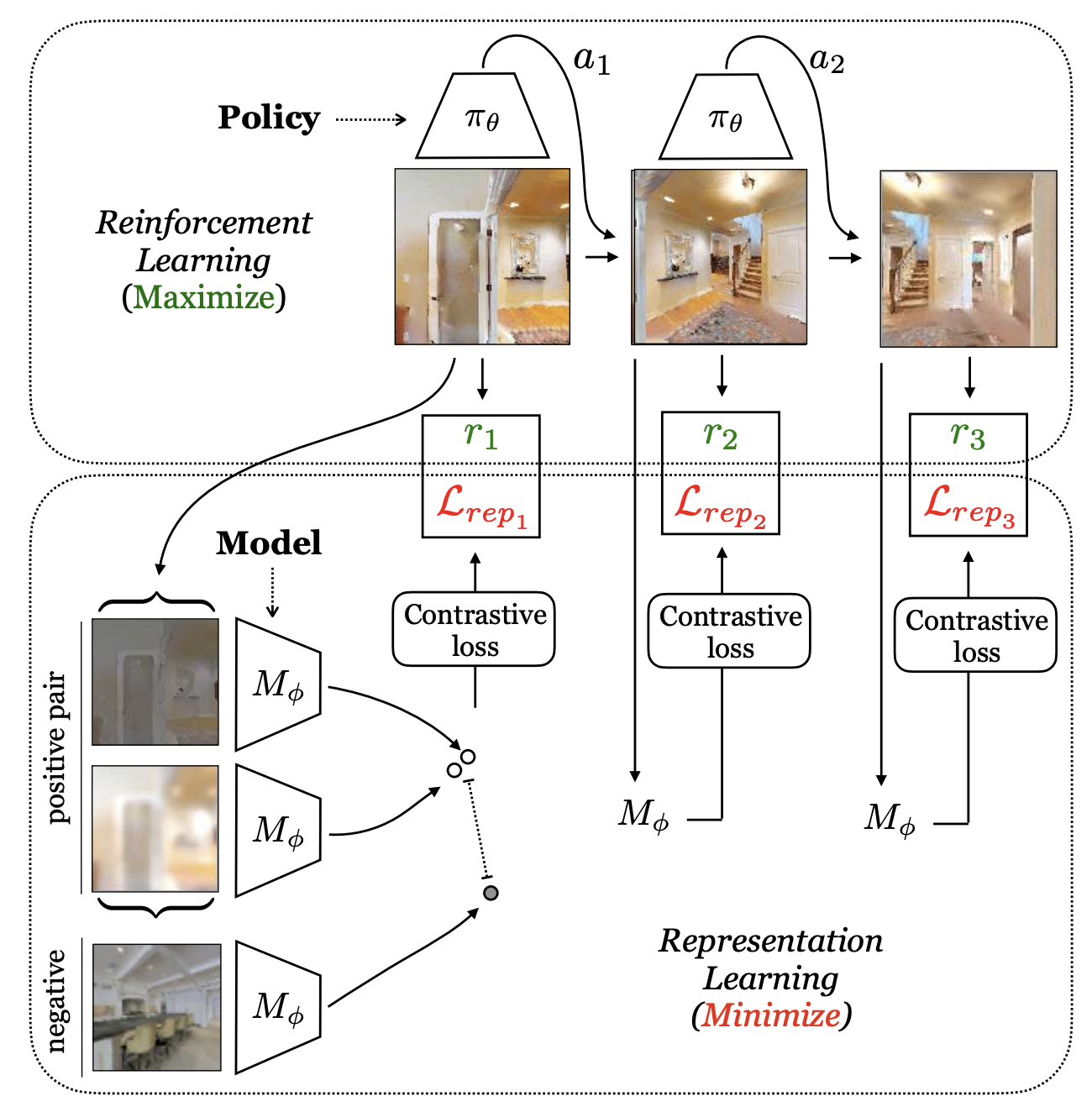

title={Curious representation learning for embodied intelligence},

author={Du, Yilun and Gan, Chuang and Isola, Phillip},

booktitle={Proceedings of the IEEE/CVF International Conference on Computer Vision},

pages={10408--10417},

year={2021}

}

Acknowledgements